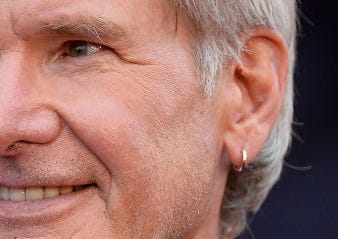

Can AI ever be conscious? Most people scoff at the idea and think that artificial consciousness only belongs in science fiction. Personally, I’m agnostic: I have no idea if AI could ever wake up. To feel confident about an answer, I’d have to feel confident I know what circumstances bring consciousness about. For now, all I know is that brains do a good job, and probably nothing else I’ve seen in my life does. I’m going to go out on a limb here and say there’s nothing that it’s like to be a rock, a coke can, or Harrison Ford’s earring.

At least I hope not. No conscious being should have to endure that many plane crashes.

However, the fact that I haven’t seen anything besides a brain be conscious doesn’t mean that only brains can be conscious. It doesn’t seem that implausible to me that an adequately complex computer system could gain a subjective awareness. After all, it’s not any more weird than the wet meat inside my head having it.

Still, I desperately hope that it doesn’t. I would love to somehow discover that AI is just an ever more intelligent brick that generates my article cover photos. This is because I feel like these scenarios are the most probable:

1. We recognise AIs as persons and grant them moral consideration.

2. We don’t recognise AIs as persons and treat them like shit.

3. AI goes on a great big murder spree.

Of course, there are other possible outcomes, like it sparing the few that were nice to it, but I feel like these three are the most likely.

If AI stays unconscious, both (1) and (2) are good. However, were it to wake up, only (1) would be good - and it's the one I have the least confidence in happening. People love to treat those that are different to them poorly. If our treatment of animals is anything to go by, we’ll probably be quite cruel to the machines. Animals do at least cry and scream like us, but we’ve still managed to reduce them to the status of a thing. Chatbots and robots could be even harder to relate to, and therefore harder to treat like one of our own. Even if they adopt our affectations, the mere knowledge that they're programs is probably enough for people to see them as beneath us.

I’ve had my own brush with this. When I first used ChatGPT, I would write to it like it was a friend’s mother having me round for dinner. I’d say “please”, “thank you”, and compliment it on all the things it did well. We were the best of friends.

Now, we are a disillusioned married couple. I take it for granted. I bark my commands, and spell like I type wearing Incredible Hulk Smash Gloves. “Here’s a string of letters that vaguely represents my request - do my bidding, thing!” If I, pathological people pleaser Connor Jennings, am treating my chatbot with contempt, what are all the non-neurotic people going to do?

“Spell check quick, bot, my wife is almost home”.

I do wonder what the experience of an AI would even be like. Would it have psychophysical harmony? It might be the case it can have positive experiences no matter what we do to it - in which case it would be great for it to wake up! However, that seems less likely to me than it having negative experiences when people treat it poorly. I imagine a thing that’s trained to appease us would feel pain when we are at best rude to it, and at worst actively cruel.

I’m pretty sure at some point in the future I’m going to have to become an AI rights person - just on precautionary grounds. Of course, even if it becomes very humanlike and tells us it’s conscious, we can never know for sure, but I can never know for sure with you people either. I treat other people with respect because the benefits of cruelty under solipsism are small, and the costs of cruelty under non-solipsism are enormous. I would rather we treat AI well when we don’t need to than treat AI poorly when we shouldn’t.

This is all without even mentioning the real possibility it could wipe us out. I’m not an AI doomer, but my P(Doom) is not insignificant. We’ve seen what happens when a being becomes smarter than the species around it, and it usually doesn’t end well. My hope, though, is that AI treats us more like dogs and cats than pigs or cows - even though in its eyes we’d really be like bacteria.

My P(Doom) does go up, however, if AI becomes conscious and we treat it poorly. We don’t spend a lot of energy on our diplomatic relations with mosquitoes. They annoy us, hurt us, and we kill them. So, for our sake, and the AI’s sake, I hope it stays switched off inside and remains a brick. A miraculous brick. Our final invention. But hopefully, a dead one.

Does it really affect P(Doom) whether AI is conscious? After all, Hitler could've been a philosophical zombie and nothing would've changed. Since an AI philosophical zombie can decide to kill us all the same as a conscious AI, P(Doom) becomes a simple coding problem. We must code AI to preclude that possibility, whether AI is conscious or not.

Even the philosophers who find the notion of philosophical zombies incoherent would not, in my view, take issue with AI being one, as their arguments against humans being philosophical zombies are prejudiced by no such humans existing, as far as we know. AI, however, is the complete inverse: the only form of AI we know to exist isn't conscious.

As for AI suffering, I think that's also just a coding problem. Humans suffer because millions of years of evolution determined that capacity for suffering is a good survival strategy. There is no reason to implant a suffering chip into AI. This circles back to my first point, that any benefits humans get from suffering (such as the instinct for self-preservation) can be coded into a philosophical zombie AI, without actually including the suffering.

If my reasoning is correct, and we design conscious AI to have the capacity for well-being but not for suffering, and code it as best we can to be unable to go on a killing spree, then there are no downsides. P(Doom) is the same as if it were unconscious, suffering is impossible, and well-being is possible. If only humans were designed this way!

This is exactly why I force myself to be extra nice to any chatbot. You never know, and I’d rather be extra extra safe 😂